If you can't see the source, you can't trust the insight.

There's a specific kind of confidence that comes from working with AI-generated insights. The language is clear. The structure is logical. The themes feel exactly right. You present the findings to your team and it lands well.

Then someone asks: where does this come from?

And that's where things get uncomfortable.

Because with most AI tools, the honest answer is: you don't fully know. The model processed the input, found patterns in the language, and generated a summary that fits the shape of what you asked. It sounds grounded. It reads like analysis. But the link between the output and the actual customer voices that supposedly produced it is opaque at best, and nonexistent at worst.

This is not a minor technical limitation. It is a fundamental problem with how general-purpose AI handles evidence, and it has consequences that compound every time a decision gets made on top of it.

The hallucination problem nobody talks about in CX

AI hallucination gets a lot of attention when it produces obviously wrong facts: incorrect statistics, fabricated quotes, made-up sources. Those errors are comparatively easy to catch because they can be checked against an external record.

The harder problem is the hallucination you can't see. The confident-sounding insight that is directionally plausible but not actually grounded in your data. The cluster of "delivery issues" that the model inferred from the surrounding language rather than from a genuine pattern in your feedback. The "significant increase in complaints about onboarding" that feels right because onboarding is always a topic, but doesn't map to a real spike in your actual customer signals.

These are the hallucinations that make it into presentations. Into product prioritisation sessions. Into decisions that affect roadmaps, team resources, and customer-facing changes.

And because there's no link back to the source, no way to say "here are the forty-seven support tickets, fourteen reviews, and six NPS open-text responses that produced this insight", there's no way to check. You have to trust the model. Or you have to do the verification work manually, which eliminates most of the efficiency you thought you were gaining.

The net result is a system that looks like it gives you more confidence and actually gives you less, because the confidence isn't earned by evidence. It's generated by language.

What traceability actually means

Traceability is not a feature. It's the minimum standard for any system that claims to produce actionable insight.

Every claim should be sourceable. Every pattern should be traceable to the customer voices that produced it. Every insight should come with a path back to the raw signal: not a summary of a summary, but the actual ticket, the actual review, the actual survey response where a real customer said something real.

This matters for three reasons that compound on each other.

First, it lets you verify. When an insight surfaces that seems important, you can look at the underlying data and confirm that it's real, that it's representative, and that it means what the system says it means. You're not asked to trust a black box. You're given the evidence and invited to evaluate it.

Second, it lets you act with confidence. When you take an insight to a product decision, a roadmap conversation, or a stakeholder meeting, you can show your work. Not "the AI told us customers are frustrated with billing", but "here are sixty-two customers, across these three channels, in the last thirty days, describing this specific friction point in their own words." That's the difference between a recommendation and a case.

Third, it builds institutional trust. The teams that struggle to get CX insights taken seriously by leadership are almost always working with outputs that can't be interrogated. When decision-makers can't trace a finding back to its evidence, they discount it, and they are right to. Traceability is what converts an insight into a credible input.
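To make "showing your work" concrete, here is a minimal Python sketch of how an evidence summary could be derived from the raw signals behind one insight. The `Signal` schema, the `evidence_summary` function, and the sample feedback are all hypothetical and invented for illustration; this is not a description of how any particular product stores or counts feedback.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Signal:
    """One raw piece of customer feedback (hypothetical schema)."""
    signal_id: str
    customer_id: str
    channel: str           # e.g. "support_ticket", "review", "nps"
    received_at: datetime
    text: str              # the customer's own words, unedited


def evidence_summary(signals, now, window_days=30):
    """Summarise the evidence behind one insight within a time window."""
    cutoff = now - timedelta(days=window_days)
    recent = [s for s in signals if s.received_at >= cutoff]
    return {
        "customers": len({s.customer_id for s in recent}),
        "channels": sorted({s.channel for s in recent}),
        "signal_ids": [s.signal_id for s in recent],
        "quotes": [s.text for s in recent],
    }


# Made-up example data, for illustration only.
now = datetime(2024, 6, 30)
signals = [
    Signal("t-101", "c-1", "support_ticket", datetime(2024, 6, 20),
           "I was charged twice this month."),
    Signal("r-202", "c-2", "review", datetime(2024, 6, 25),
           "Billing page is confusing."),
    Signal("n-303", "c-1", "nps", datetime(2024, 6, 28),
           "Still waiting on my refund."),
    Signal("t-050", "c-3", "support_ticket", datetime(2024, 3, 1),
           "Invoice was wrong."),
]

summary = evidence_summary(signals, now)
print(summary["customers"], summary["channels"])
```

The point of the design is that every number in the summary is computed from signals that can be listed by ID and quoted verbatim, so anyone who doubts the count can open the underlying records.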

Nothing invented. Everything linked.

Zefi is built on a simple principle: every insight links back to its source. Always.

Not a representative sample. Not a reference to the general category of data it came from. The specific customer signals, the exact words, from the exact channel, at the exact moment that produced the pattern you're looking at.

This is not optional infrastructure. It's the foundation of the whole system. Because an insight you can't verify is not intelligence. It's a suggestion from a model that doesn't know the difference between what it found and what it inferred.
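One way to picture the difference between "found" and "inferred" is a check that flags any insight whose sources are missing or do not resolve to stored feedback. The sketch below is a hypothetical illustration in Python: the `Insight` record, the `untraceable` function, and the sample data are assumptions made for the example, not Zefi's actual implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Insight:
    """A claim plus the IDs of the raw signals said to support it (hypothetical)."""
    claim: str
    source_ids: tuple


def untraceable(insights, signal_store):
    """Return (claim, missing_ids) for insights that cannot be fully traced.

    An insight is flagged if it cites no sources at all, or if any cited
    source ID is absent from the store of raw signals.
    """
    flagged = []
    for insight in insights:
        missing = [sid for sid in insight.source_ids if sid not in signal_store]
        if not insight.source_ids or missing:
            flagged.append((insight.claim, missing))
    return flagged


# Made-up example data, for illustration only.
signal_store = {
    "t-101": "I was charged twice this month.",
    "r-202": "Billing page is confusing.",
}
insights = [
    Insight("Customers report billing friction", ("t-101", "r-202")),
    Insight("Onboarding complaints are rising", ()),
    Insight("Delivery issues are common", ("t-999",)),
]

flagged = untraceable(insights, signal_store)
for claim, missing in flagged:
    print(claim, missing)
```

In this toy data, only the billing claim survives the check. The other two read just as plausibly, which is exactly why a resolvable link to the source matters more than how convincing the wording sounds.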

The question to ask of any tool you're using to understand your customers is simple: can you show me where this came from?

If the answer is anything other than a direct link to real source data, you don't have insight. You have output.

Extract value from user feedback

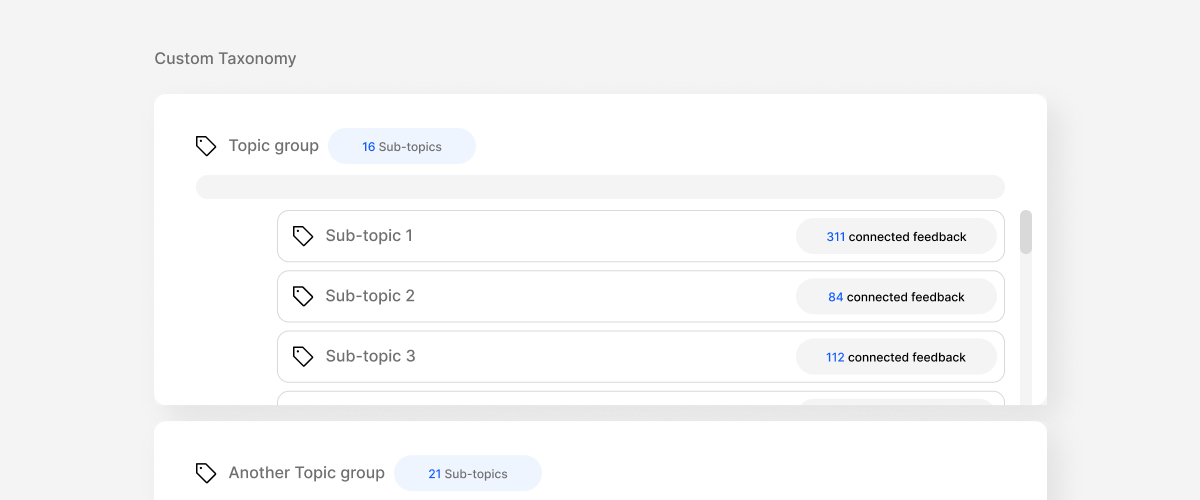

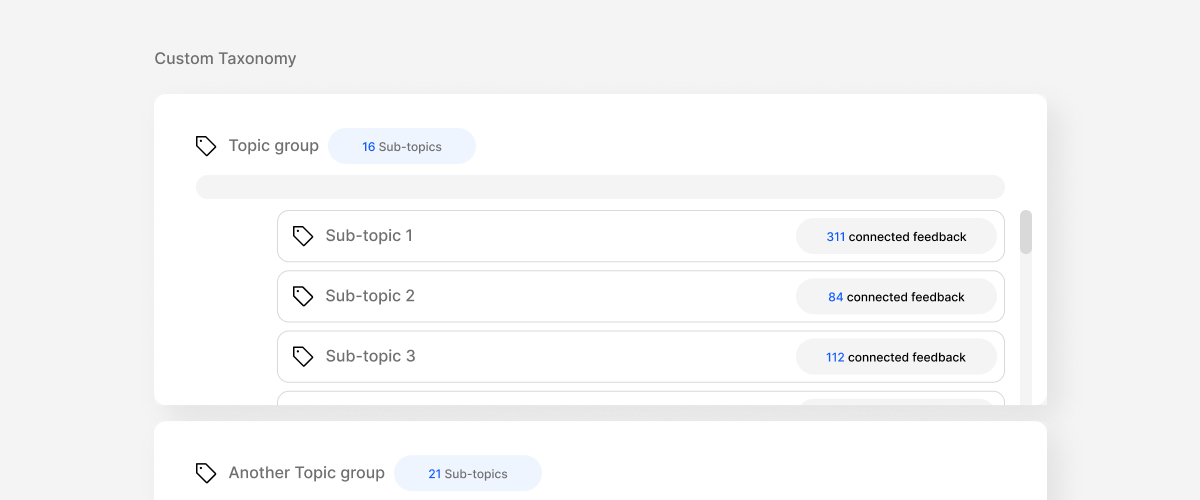

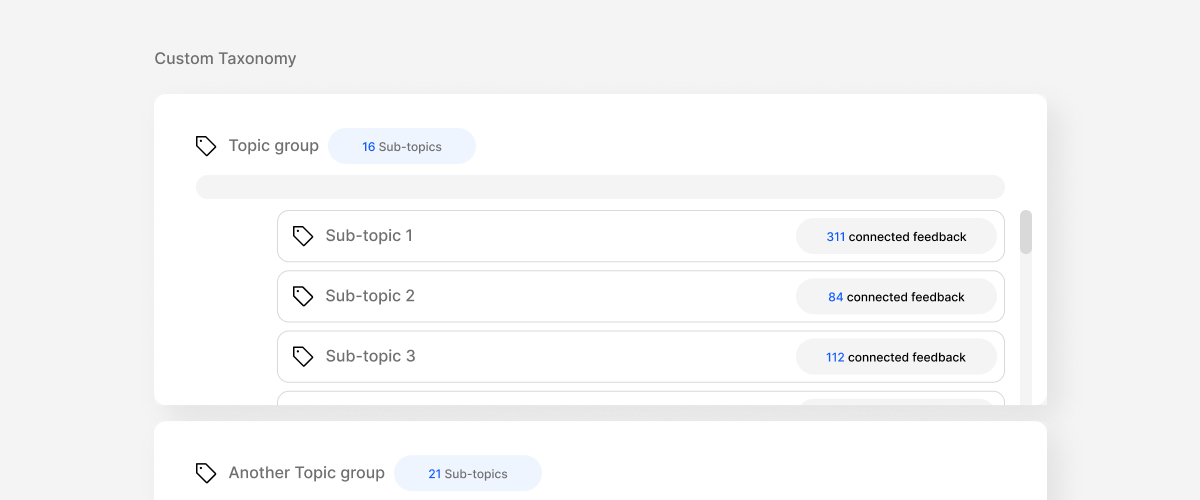

Unify and categorize all feedback automatically.

Prioritize better and build what matters.