The feedback loop is broken. Here's how to fix it.

The feedback loop is broken

At some point in the evolution of every CX programme, the same realisation hits.

The team has worked hard to understand what customers are saying. The data is cleaner than it's ever been. The insights are sharper. The dashboard is genuinely impressive. Leadership nods along in the quarterly review. Everyone agrees that the findings are important.

And then the meeting ends. And nothing happens.

Not because the team isn't capable. Not because the insights weren't compelling. But because between "we know what the problem is" and "the customer's problem is resolved," there is a gap, and nobody built a bridge across it.

This is the loop closure problem. And it is the place where most CX programmes quietly fail, after doing everything else right.

Where AI tools stop

The efficiency case for using general-purpose AI in CX analysis is legitimate. Processing large volumes of unstructured feedback, identifying themes and generating summaries are all tasks that AI performs faster and more cheaply than manual analysis. For a team trying to get from raw data to structured understanding, that's genuinely useful.

But here is where it ends: the text box.

Every AI tool of this kind is, by design, an output machine. You put signal in. You get language out. What you do with that language is entirely your problem.

The model cannot send a recovery message to the customer who left the three-star review. It cannot create a ticket in your support platform for the issue that just crossed a severity threshold. It cannot notify the product team that a specific friction point has spiked 40% in the last two weeks. It cannot trigger a workflow, update a record, or close any loop of any kind.

It produces text. You decide what to do with the text. You do the doing.

This means that every piece of insight generated by a general-purpose AI tool is, at best, an input to a manual process. And manual processes have a well-documented set of problems: they depend on the right person seeing the right information at the right time, they don't scale, they break when teams get busy, and they are invisible to anyone not directly involved.

The insight exists. The action doesn't follow automatically. The loop doesn't close. And customers (the ones who complained, who churned quietly, who sent the survey response that identified the most urgent issue of the quarter) never hear back.

The report that nobody acted on

Legacy VoC platforms solved part of this problem. They built persistence. They built history. They built dashboards that showed you trends over time rather than snapshots in isolation.

What most of them did not build was action.

The dominant product logic of enterprise VoC platforms is reporting. They are extraordinarily good at telling you what happened. They are much less good at ensuring that something happens next.

The workflow sits outside the platform. You read the report. You decide what to do. You go to your support tool, your CRM, your project management system, your email client, and you do the thing manually. The VoC platform's job is done the moment you close the dashboard.

This is a structural choice, not an oversight. These platforms were built for a world where the CX team's primary function was to inform leadership, not to drive operational change. The insight flowed upward. The decision to act flowed downward. The loop (if it closed at all) closed over weeks or months, through organisational processes that had nothing to do with the tool.

The result is a category of software that generates enormous amounts of useful information and is systematically disconnected from the moment of action. Teams use it to build the case for change. The actual change happens somewhere else, through processes that the platform cannot see, measure, or accelerate.

And so the gap between signal and response stretches out. Customers who flagged a problem in week one get a fix in week twelve, if they get one at all. The organisations that can afford to move faster do. Everyone else manages the consequences.

The manual process dressed up as infrastructure

In-house built solutions arrive at the same destination from a different direction.

The team that builds internal CX tooling typically does so because they want control over the data, the logic, the integrations, the outputs. And in the early stages, that control is real. The system does exactly what the builder designed it to do.

What it almost never does is automate the action layer.

Building reliable, scalable workflow automation is a hard engineering problem. It requires integrations with multiple external systems, error handling for when those systems change their APIs, logic for edge cases, monitoring for failures, and ongoing maintenance as the underlying systems evolve. It is, in other words, a significant project on top of an already significant project.

So most in-house solutions stop short. They get the data in. They get the analysis running. They surface the insight to a dashboard, a report, a Slack message. And then a human takes over.

The human checks the report. Identifies the at-risk customer. Opens the CRM. Finds the record. Drafts the message. Sends it. Updates the ticket. Marks the loop as closed.

This works. It works exactly as well as the human's bandwidth allows, which means it works perfectly when things are quiet and breaks down exactly when it's most needed, when the volume of at-risk signals spikes and the team is already stretched.

Manual is not a workflow. It's a plan for when nothing is going wrong.

Closing the loop automatically

Zefi is built on the premise that insight and action are not two separate steps. They are two parts of one system, and the system isn't working until both parts are running.

This means that when a pattern crosses a threshold you've defined (a spike in negative sentiment, a surge in a specific category of complaint, a customer whose health score has dropped into at-risk territory), the response doesn't wait for someone to notice. It happens.

The right team gets notified. The at-risk customer gets a recovery touchpoint. The relevant ticket gets created in your support system. The workflow that you designed for this exact scenario runs automatically, consistently, at a speed and scale that no manual process can match.
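To make the threshold-to-action idea concrete, here is a minimal Python sketch of a generic rule engine. It is not Zefi's implementation or API: `Rule`, `evaluate`, the metric names (`negative_sentiment_share`, `health_score`), the threshold values and the three action functions are all hypothetical placeholders. The actions just return strings where a real system would call a support platform, messaging tool or CRM.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical snapshot of current signals: metric name -> latest value.
Signals = Dict[str, float]
Action = Callable[[str, float], str]


@dataclass
class Rule:
    """One threshold rule: when `metric` crosses `threshold`, run every action."""
    name: str
    metric: str
    threshold: float
    above: bool  # True: fire when value > threshold; False: fire when value < threshold
    actions: List[Action]

    def is_triggered(self, signals: Signals) -> bool:
        value = signals.get(self.metric)
        if value is None:
            return False  # no data for this metric, so nothing to act on
        return value > self.threshold if self.above else value < self.threshold


# Placeholder actions (hypothetical): each returns a log line instead of
# calling an external system.
def notify_team(rule_name: str, value: float) -> str:
    return f"notify:{rule_name}:{value}"


def create_ticket(rule_name: str, value: float) -> str:
    return f"ticket:{rule_name}:{value}"


def send_recovery_message(rule_name: str, value: float) -> str:
    return f"recovery:{rule_name}:{value}"


def evaluate(rules: List[Rule], signals: Signals) -> List[str]:
    """Run the actions of every triggered rule and return a log of what ran."""
    log: List[str] = []
    for rule in rules:
        if rule.is_triggered(signals):
            value = signals[rule.metric]
            for action in rule.actions:
                log.append(action(rule.name, value))
    return log


# Example rules with made-up thresholds.
rules = [
    Rule("sentiment_spike", "negative_sentiment_share", 0.30, True,
         [notify_team, create_ticket]),
    Rule("at_risk_customer", "health_score", 40.0, False,
         [send_recovery_message]),
]

if __name__ == "__main__":
    print(evaluate(rules, {"negative_sentiment_share": 0.42, "health_score": 55.0}))
```

Keeping rules as data, rather than as hard-coded `if` statements, is one way to let a CX team add or tune thresholds without touching the evaluation loop. The genuinely hard part is making each action reliable against real external systems.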

This is what workflow automation means in a CX context. Not a fancier dashboard. Not a better report. A system that connects the moment of insight to the moment of action, without a human having to carry the handoff.

The loop closes because it's built to close. Not because someone remembered to check the report. Not because the right analyst happened to be online when the signal appeared. Because the infrastructure was designed for the whole job, not just the analysis half.

The customers who never hear back

Here's the thing about loop closure that gets lost in the operational conversation about tools and workflows.

There are customers at the other end of every unclosed loop. Customers who took the time to explain what was wrong. Who filled in the survey. Who left the review. Who sent the email. Who gave you, voluntarily and at some personal effort, the information you needed to fix the thing that was breaking their experience.

And heard nothing back.

What those customers learn from that silence is that the feedback went nowhere. That the survey was for the company's benefit, not theirs. That complaining is pointless. And they act accordingly by leaving, by telling others, by never engaging with your feedback mechanisms again.

Loop closure is not an operational efficiency story. It's a customer relationship story. The organisations that systematically close the loop (that reach back, acknowledge, fix, follow up) build a kind of trust that no marketing campaign can manufacture.

Zefi closes the loop automatically. So you never have to choose between speed and scale. And your customers never have to wonder if anyone was listening.

Extract value from user feedback

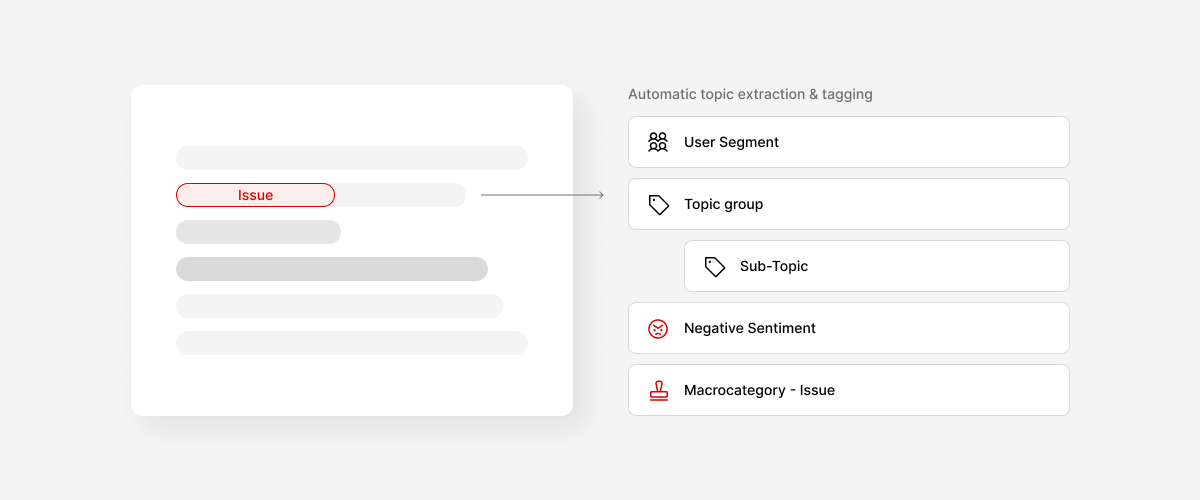

Unify and categorize all feedback automatically.

Prioritize better and build what matters.