Speed is a strategy. Most CX solutions are costing you months.


Speed is a strategy

There's a question every CX or Product leader eventually has to answer, usually after spending a quarter trying to get a new tool working, or six months waiting for an in-house build to deliver its first output.

Why is it taking this long to simply understand what our customers are telling us?

It's not a process problem. It's not a people problem. It's an infrastructure problem. And it starts with a choice made at the beginning: which tool, platform, or approach do you bet on?

That choice has a speed consequence that most teams don't fully appreciate until they're living inside it. So let's be direct about what each path actually costs — in time, in momentum, and in the compounding gap between when a problem starts and when you finally have the information to act.

The speed illusion of generic AI

If you've experimented with ChatGPT, Claude, Gemini, or any of the general-purpose AI tools for feedback analysis, you've probably experienced the initial rush of it. You paste in a set of reviews, you ask a question, and in seconds you have a structured summary that would have taken an analyst an hour to produce.

Fast. Impressive. Genuinely useful for a one-off task.

But here's what happens at month three. You paste in the next batch of reviews. The AI has no memory of the last batch. It doesn't know what changed, what improved, what got worse. It doesn't know your taxonomy, the specific way your business categorises "payment issues" or "onboarding friction" or "feature requests." It doesn't know that the spike you're seeing in negative sentiment this week is connected to a release your engineering team pushed last Tuesday.

Every session starts from zero. Every session produces a snapshot. And a collection of disconnected snapshots is not intelligence. It's noise with better formatting.

Generic AI tools are fast to start. They are not fast to value — because value in CX requires continuity, memory, and structure that compounds over time. None of that exists in a stateless chat interface.

You haven't gained speed. You've just moved the bottleneck upstream.
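To make the continuity point concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not how any particular product works: the category names are the ones mentioned above, while the keyword lists, the sample reviews, and the helper names (`categorise`, `batch_counts`, `change_since`) are all hypothetical. The point it shows is simple: a week-over-week change can only be computed if last week's categorised counts were kept somewhere.

```python
from collections import Counter

# Hypothetical taxonomy: category names come from the article,
# the keyword lists are invented for illustration only.
TAXONOMY = {
    "payment issues": ["payment", "charged"],
    "onboarding friction": ["onboarding", "sign up"],
}


def categorise(review, taxonomy=TAXONOMY):
    """Return the first category whose keywords appear in the review."""
    text = review.lower()
    for category, keywords in taxonomy.items():
        if any(keyword in text for keyword in keywords):
            return category
    return "uncategorised"


def batch_counts(reviews, taxonomy=TAXONOMY):
    """Count reviews per category for one batch (one snapshot)."""
    return Counter(categorise(review, taxonomy) for review in reviews)


def change_since(previous, current):
    """Per-category difference between two batches.

    This needs the previous batch's counts, which is exactly what a
    stateless session does not have.
    """
    categories = sorted(set(previous) | set(current))
    return {c: current[c] - previous[c] for c in categories}


# Made-up sample data for two consecutive weeks.
last_week = batch_counts(["I was charged twice", "Onboarding was confusing"])
this_week = batch_counts(
    ["Payment failed again", "Charged twice", "Payment page crashed"]
)

print(change_since(last_week, this_week))
# {'onboarding friction': -1, 'payment issues': 2}
```

Drop `last_week` and the only thing left is this week's counts: a snapshot with no way to say what changed.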

The slow reality of legacy VoC platforms

At the other end of the spectrum, you have the enterprise VoC platforms. The ones with the long sales cycles, the detailed RFPs, the implementation teams, the onboarding workshops, and the six-figure annual contracts.

These platforms were built to last. And they do last, which is part of the problem. The architecture was designed for a world where insight moved at the pace of quarterly business reviews. Where a CX team's job was to produce a report, present it to leadership, and wait for the next cycle.

What they were not designed for is speed. The average enterprise VoC implementation takes three to six months before a team sees meaningful output. Some take longer. By the time the taxonomy is configured, the integrations are connected, the dashboards are set up, and the stakeholder training is done, entire product cycles have passed. Customers have churned. Issues that could have been caught in week two are now embedded in your NPS baseline.

And even after go-live, the speed of insight depends on how quickly the platform processes and surfaces data, and in most legacy systems that is still measured in hours or days, not seconds.

You pay for the brand. You pay for the enterprise features. You wait a very long time to find out whether any of it was worth it.

The hidden timeline of building in-house

Building your own CX data infrastructure is the choice that looks like a rational long-term investment. Full control. Custom-fit to your business. No vendor dependency.

Here is what the timeline actually looks like, based on what we've watched play out across dozens of companies that tried it.

Months one through three: scoping, architecture decisions, data pipeline design. Months four through eight: first integrations connected, initial taxonomy debated (and debated again). Months nine through twelve: MVP is live but incomplete, the person who owned the project has moved to a different team, and the backlog of improvements is growing faster than the team can address it.

In optimistic cases, you have structured, reliable signal at month twelve. In realistic cases, it's closer to eighteen. In common cases, the project is quietly deprioritised when the roadmap gets crowded and the original business case can no longer be defended to a new CFO.

Twelve to eighteen months. That is the range before an in-house build gives you your first meaningful, structured customer signal. During that entire window, your competitors who chose differently are operating on real-time intelligence you don't have.

And the ongoing cost is rarely modelled accurately upfront. Maintenance. Taxonomy drift. Engineer availability. Compliance requirements that weren't anticipated. The in-house build that looked like a one-time investment becomes a permanent line item with no vendor SLA to hold accountable when something breaks.

What fast actually looks like

Zefi goes live in days. Not weeks. Not months. Days.

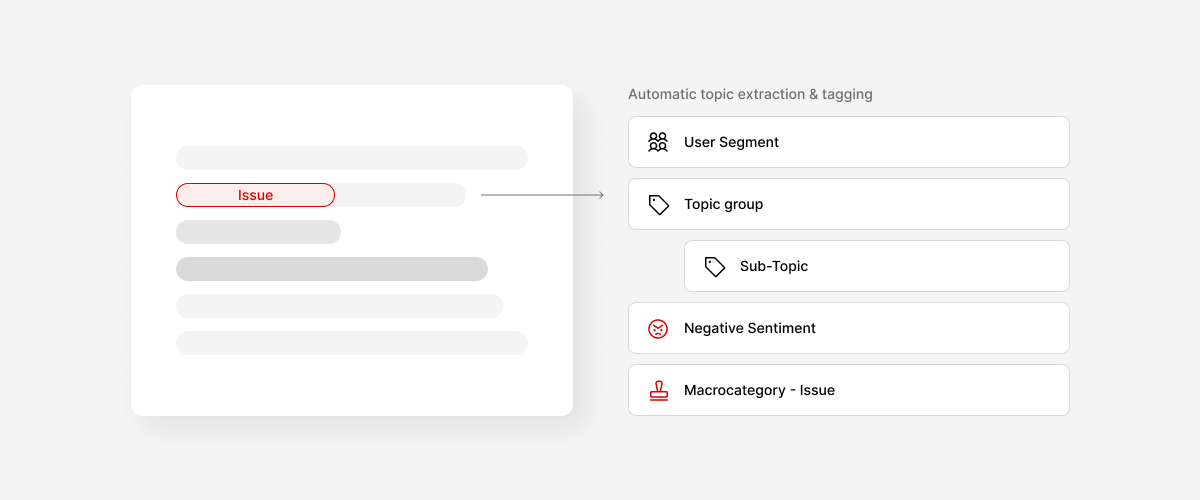

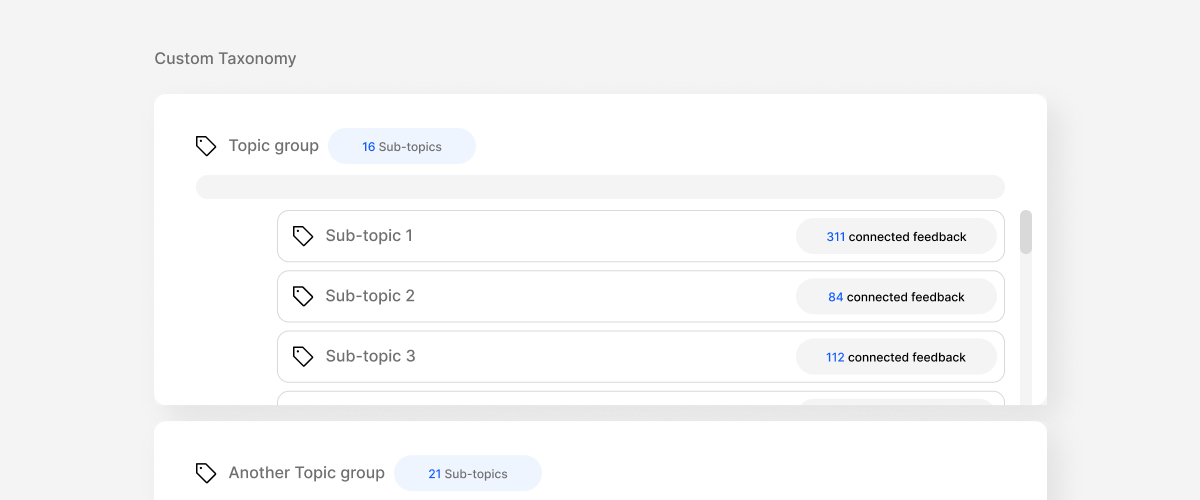

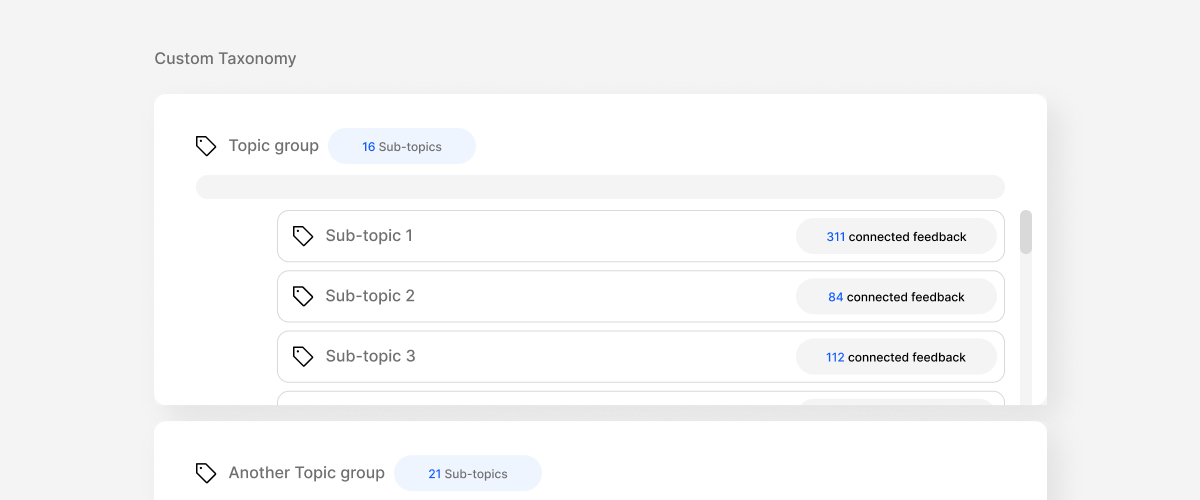

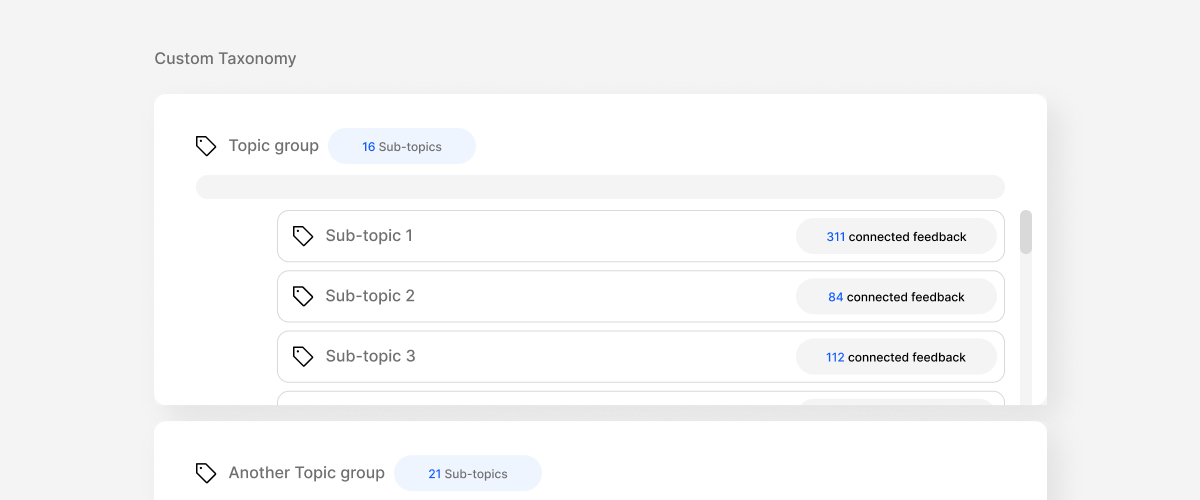

The integrations connect to your existing feedback sources, such as support tickets, reviews, in-app surveys, and sales calls, and within hours the system starts processing signal. The taxonomy isn't a blank canvas you have to fill from scratch; it's built from the shape of your data, guided by the expertise of a team that has done this hundreds of times, and refined to reflect the way your business actually thinks about customer experience.

By the end of the first week, you have structured, categorised, real-time insight across your feedback sources. Patterns you didn't know existed. Spikes you would have caught too late. Connections between channels that no single-source analysis would have surfaced.

And then, critically, it stays. Week two builds on week one. Month three builds on month one. The taxonomy gets sharper. The patterns get more precise. The alerts get smarter. The system compounds.

That's what separates Zefi from a fast start that goes nowhere. Every insight is anchored to a persistent, versioned, auditable structure. Every signal is traceable. Every week adds to a body of knowledge that makes the next decision faster and more accurate than the last.

This is not speed as a feature. It's speed as a structural property of the infrastructure. Built in, not bolted on.

Why the speed gap compounds

There's a reason speed matters beyond the obvious. It's not just that slow tools are frustrating. It's that the gap between when a customer problem starts and when you have the information to act on it has a cost that compounds daily.

Every day that passes before you detect a pattern is a day more customers experience the same problem. Every week without structured signal is a week your roadmap is being prioritised on assumptions instead of evidence. Every month your competitors are operating on real-time intelligence you don't have is a month they're getting faster, sharper, and harder to catch.

The teams that close this gap early don't just get to insights faster. They build a systematic advantage that is very hard to replicate from behind.
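A back-of-the-envelope sketch of that gap, in Python. Every number here is hypothetical: the daily rate of 20 affected customers is invented, and the three delays are only rough stand-ins for "first week", "three months", and "twelve months". The model also assumes a flat daily rate, which is a deliberate simplification; if a problem spreads or drives churn, the real figure would grow faster than this.

```python
def customers_exposed(daily_affected, days_to_detect):
    """Customers who hit a problem before it is detected.

    Assumes a constant number of affected customers per day
    (a simplifying assumption, not a measured figure).
    """
    return daily_affected * days_to_detect


DAILY_AFFECTED = 20  # hypothetical rate, for illustration only

for label, days in [("one week", 7), ("three months", 90), ("twelve months", 365)]:
    exposed = customers_exposed(DAILY_AFFECTED, days)
    print(f"{label}: {exposed} customers exposed")
# one week: 140 customers exposed
# three months: 1800 customers exposed
# twelve months: 7300 customers exposed
```

Even under the flat-rate assumption, the difference between detecting in a week and detecting in a year is a factor of about fifty.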

Can you afford not to do it?

The question is not whether you can afford to move fast. The question is whether you can afford not to.

Every path has a cost. Generic AI is cheap to start and expensive to sustain without structure. Legacy platforms are expensive upfront and slow to deliver. In-house builds are expensive in time, maintenance, and missed opportunity. And doing nothing — continuing to operate on manual analysis, quarterly reports, and reactive decision-making — has the highest cost of all, because it's invisible until the moment it isn't.

Zefi is built for the teams that have decided they're done waiting. Days to live. Seconds to insight. A structure that compounds from day one.

That's the speed a modern CX team deserves. It's also the speed the market is starting to demand.
